One of the significant changes to the CAR 2024 program is the addition of longer and more frequent breaks throughout the event, allowing everyone to connect with speakers and catch up with colleagues. Attendees will also have the chance to find out more about other organizations under the CAR umbrella, like the five Affiliate Societies. With plenty of time set aside each day, attendees will have many opportunities to network, socialize, and celebrate excellence in radiology. Read More

News

Expert Speakers and a Wealth of Career Experience at Virtual Trainee Day

Supported by GE HealthCare

If you are early in your radiology career and would like direction in your areas of interest, then the Virtual Trainee Day is a must-attend event. Virtual Trainee Day takes place on Friday, April 5, and features veteran speakers in the field of radiology who will share their expertise and experience with trainees. Read More

Network with Colleagues in Your Subspecialty and Meet the Affiliate Societies at CAR 2024!

Representatives from the five CAR Affiliate Societies will be at CAR 2024 and are looking forward to connecting with you! Check out all the CAR Affiliate Societies' upcoming networking opportunities. Read More

Bringing Canadian Medical Expertise to the Height of International Competition

While most of us are excited to watch the upcoming Paris Olympic Games as fans from the comfort of our couches, one CAR member will travel to France to take part in providing care for the world's greatest athletes. Dr. Bruce Forster will make the journey overseas as part of the International Olympic Committee (IOC) medical team. Read More

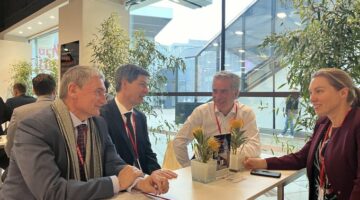

Representing Canada Internationally: ECR 2024 Recap

Canada's radiology community is dedicated and innovative. The European Congress of Radiology (ECR) 2024 offered a platform to demonstrate these qualities by engaging with medical societies and collaborating on an international stage. CAR President Dr. Ania Kielar, Past-President Dr. Gilles Soulez, and CEO Nick Neuheimer attended the event in Vienna, Austria, from February 28 to March 3. Read More

The First and Only Low-osmolar Iodinated Contrast Media Approved for Oral Use in Canada

Presented by GE HealthCare

Earlier this year, GE HealthCare announced Health Canada's approval of Omnipaque injection for Oral Use, making it the first and only low-osmolar iodinated contrast media approved for oral use in Canada. Omnipaque injection for Oral Use is indicated in adults and pediatric patients. Read More

Sharing Experience and Teaching Negotiation Skills for Women in Radiology

Negotiating is a professional skill that needs to be learned and developed, and while some are great at negotiation, it does not come naturally to most. Earlier in February, the CAR partnered with the University of Toronto (U of T) Department of Medical Imaging to host Women in Radiology: Negotiation Skills, an informative evening dedicated to discussing the importance of negotiation in radiology careers, sharing stories of personal negotiation experience, and outlining steps women in radiology can take to improve this skill set. Read More

Peer Reviewing Offers Rewarding Academic Experience

Academic journals like the Canadian Association of Radiologists Journal (CARJ) and many others would not be possible without the dedicated work of peer reviewers. For CAR member Dr. Anass Benomar, the peer reviewing experience has been a rewarding journey. Read More

CAR 2024 is Your Chance to Get Involved with the Affiliate Societies

The Affiliate Societies are an important part of the CAR and are critical to creating the educational content featured at CAR 2024. These groups are collections of experts who are dedicated to representing their subspecialty and disseminating their expertise. In addition to developing relevant educational sessions each year, the Affiliate Societies take the opportunity to meet CAR members by holding Business Meetings and a Networking Dinner in conjunction with CAR 2024. Read More

Leading the Way in Radiology Research for 75 Years

Canada's first-ever medical specialty journal is celebrating a major milestone with its latest published volume. The Canadian Association of Radiologists Journal (CARJ) released the first issue of its 75th volume earlier this month, continuing its leadership in quality and consistency in the field of radiology research. Read More

Interactive and Engaging Education: Register Today for the CAR 2024 Emergency Radiology Workshop

A highlight of the CAR's Annual Scientific Meeting is the educational workshop that provides an in-depth and hands-on approach to learning about specific topics in radiology. This year, CAR 2024 kicks off with a day-long workshop on emergency radiology called Mastering ER Radiology: Accurate Diagnoses and Timely Decision-Making. Experts from across the country will come together to put theory into practice in several areas of emergency radiology, including abdominal and musculoskeletal imaging, neuroradiology, and more. Read More

Addressing Challenges, Providing Value for Our Members, and Raising the Profile of Radiology in Canada

The CAR's Outlook for 2024

By Dr. Ania Kielar, CAR President

Coming into a new year, the CAR will continue to seek out new opportunities for advancement and for enhancing patient care.

Over the past few years, radiology has faced some significant challenges, and our members have worked tirelessly to advocate for enhancements in healthcare delivery and quality patient care. Read More